When I first started exploring the world of AI accelerators, I found it helpful to read a set of well-written papers and project reports. They introduced me to key ideas such as roofline models, blocking strategies, dataflow mapping, sparsity, mixed precision, and real chip designs. This list is organized by publication date, with short notes [...]

Multiprocessors and Thread-Level Parallelism. Chapter 5 in Computer Architecture: A Quantitative Approach (6th ed.) by Hennessy and Patterson (2017). Introduction: The increased importance of multiprocessing reflects several major factors: the inefficiency of adding more instruction-level parallelism, which leaves multiprocessing as the only scalable and general-purpose way forward; a growing interest in high-end servers for cloud computing and software-as-a-service; a growth in data-intensive applications; increasing performance on the desktop is [...]

Data-Level Parallelism in Vector, SIMD, and GPU Architectures. In this post, I summarize Chapter 4 in Computer Architecture: A Quantitative Approach (6th ed.) by Hennessy and Patterson (2017). Introduction: The single instruction, multiple data (SIMD) classification was proposed by Flynn (1966). A question for the SIMD architecture: how wide a set of applications has significant data-level parallelism (DLP)? Matrix-oriented computations of [...]

Instruction-Level Parallelism and Its Exploitation. In this post, I summarize Chapter 3 in Computer Architecture: A Quantitative Approach (6th ed.) by Hennessy and Patterson (2017). Instruction-Level Parallelism: Concepts and Challenges. All processors since about 1985 use pipelining to overlap instruction execution; this potential overlap among instructions is called instruction-level parallelism (ILP). Basic pipelining is covered in Appendix C, “Pipelining: Basic and Intermediate Concepts.” This chapter [...]

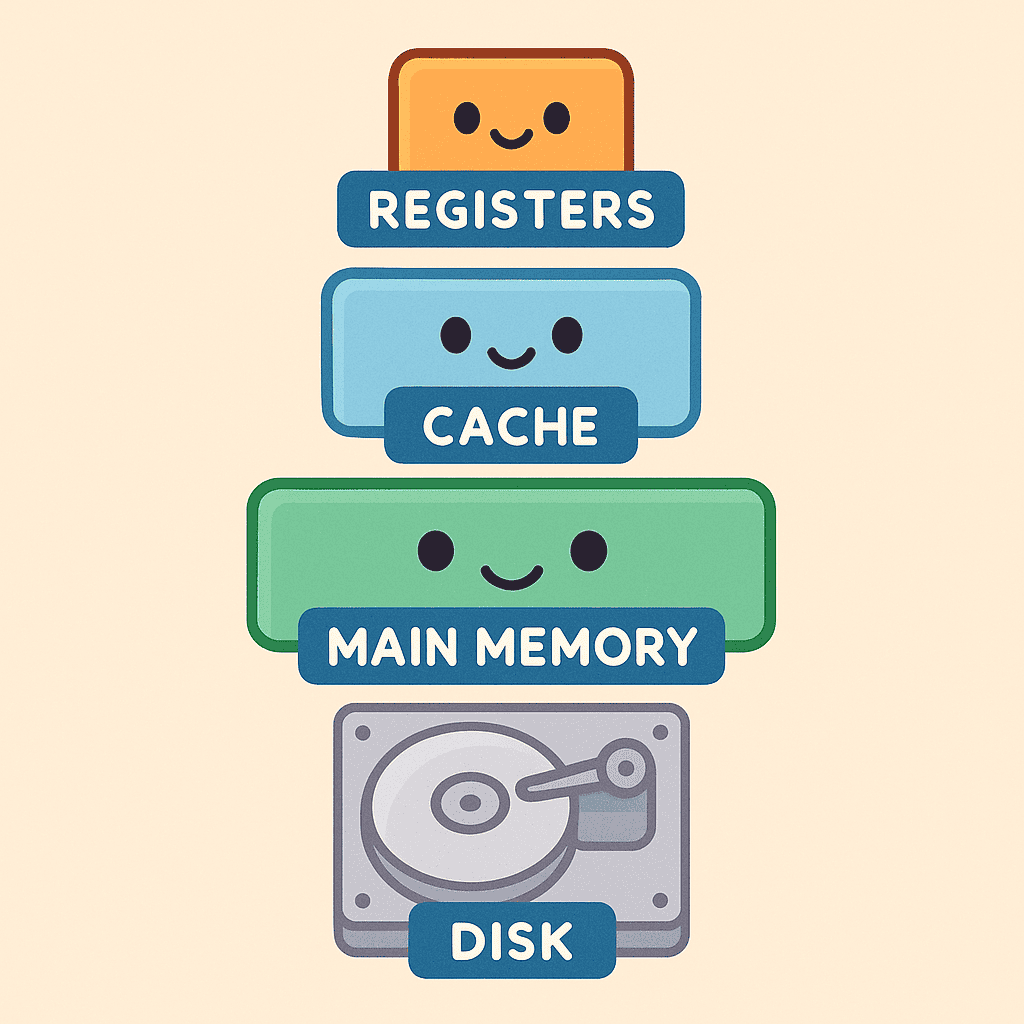

“Ideally one would desire an indefinitely large memory capacity such that any particular… word would be immediately available… We are… forced to recognize the possibility of constructing a hierarchy of memories each of which has greater capacity than the preceding but which is less quickly accessible.” – A. W. Burks, H. H. Goldstine, and J. von Neumann, Preliminary Discussion [...]

Why “Computer Architecture: A Quantitative Approach”? When I first started studying computer architecture, I relied on textbooks like Computer Organization and Design (Patterson & Hennessy) and Digital Design and Computer Architecture (Harris & Harris) to build a solid foundation in instruction-set architecture and microprocessor basics. While these books were incredibly helpful for understanding the fundamentals, [...]